Simplicity is usually a good thing, but in some cases it means that the complexities are left to the user. Part of Kafka's simplicity comes from its lack of dependency on other applications (other than Apache ZooKeeper). As a result, the responsibility for writing producers and consumers falls on the developer. Fortunately, writing code for Kafka is not especially complicated (more than 15 languages are supported, including the more popular Java, Scala, and Python). Things have been made even simpler by the integration of Flume and its code base with Kafka.

Flume-Kafka Integration

The two applications have been integrated. By using the Kafka sink, Flume can publish messages to multiple Kafka topics. This integrated capability is available in Cloudera Distribution including Apache Hadoop (CDH) 5.2 and in Hortonworks Data Platform (HDP) 2.4, as well as in the regular open source Apache Hadoop ecosystem.

Figure 4: Cloudera Source – Kafka as a Flume Source

The Flume agent consists of three elements: 1) Source, 2) Channel, and 3) Sink.
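A minimal Python sketch can make the source, channel, and sink model concrete. In it, a source writes events into a bounded channel and a sink drains that channel. This is a toy model, not Flume's API:

- The class names (`Event`, `MemoryChannel`, `ListSource`, `TopicRoutingSink`) are hypothetical.
- "Publishing" only appends to an in-memory dict; nothing talks to a Kafka broker.
- The routing rule is loosely modeled on the behavior documented for Flume's Kafka sink. There, a `topic` event header overrides the configured default topic, which is one way a single sink can feed multiple Kafka topics.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Event:
    """Flume-style event: a byte payload plus string headers (simplified)."""
    body: bytes
    headers: dict = field(default_factory=dict)


class MemoryChannel:
    """Hypothetical bounded FIFO buffer sitting between a source and a sink."""

    def __init__(self, capacity: int = 100):
        self.capacity = capacity
        self._queue = deque()

    def put(self, event: Event) -> None:
        # This sketch simply refuses new events once the buffer is full.
        if len(self._queue) >= self.capacity:
            raise RuntimeError("channel full")
        self._queue.append(event)

    def take(self) -> Optional[Event]:
        # Return the oldest buffered event, or None when the channel is empty.
        return self._queue.popleft() if self._queue else None


class ListSource:
    """Hypothetical source that turns in-memory (body, headers) records into events."""

    def __init__(self, records, channel: MemoryChannel):
        self.records = records
        self.channel = channel

    def run(self) -> None:
        for body, headers in self.records:
            self.channel.put(Event(body, dict(headers)))


class TopicRoutingSink:
    """Hypothetical sink that 'publishes' each event to a named topic.

    A 'topic' header, when present, overrides the default topic (loosely
    modeled on Flume's Kafka sink). Topics here are just dict entries.
    """

    def __init__(self, channel: MemoryChannel, default_topic: str):
        self.channel = channel
        self.default_topic = default_topic
        self.topics = {}

    def process(self) -> int:
        # Drain the channel and return how many events were delivered.
        delivered = 0
        while (event := self.channel.take()) is not None:
            topic = event.headers.get("topic", self.default_topic)
            self.topics.setdefault(topic, []).append(event.body)
            delivered += 1
        return delivered


# Usage: one agent, one sink, three destination topics.
channel = MemoryChannel(capacity=10)
source = ListSource([
    (b"click:/home", {"topic": "clickstream"}),
    (b"login failed", {"topic": "security"}),
    (b"app started", {}),
], channel)
sink = TopicRoutingSink(channel, default_topic="app-logs")
source.run()
sink.process()
print(sink.topics)
# {'clickstream': [b'click:/home'], 'security': [b'login failed'], 'app-logs': [b'app started']}
```

The channel decouples the source from the sink. The source can keep accepting events while the sink lags, up to the channel's capacity, at which point this sketch simply raises an error.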

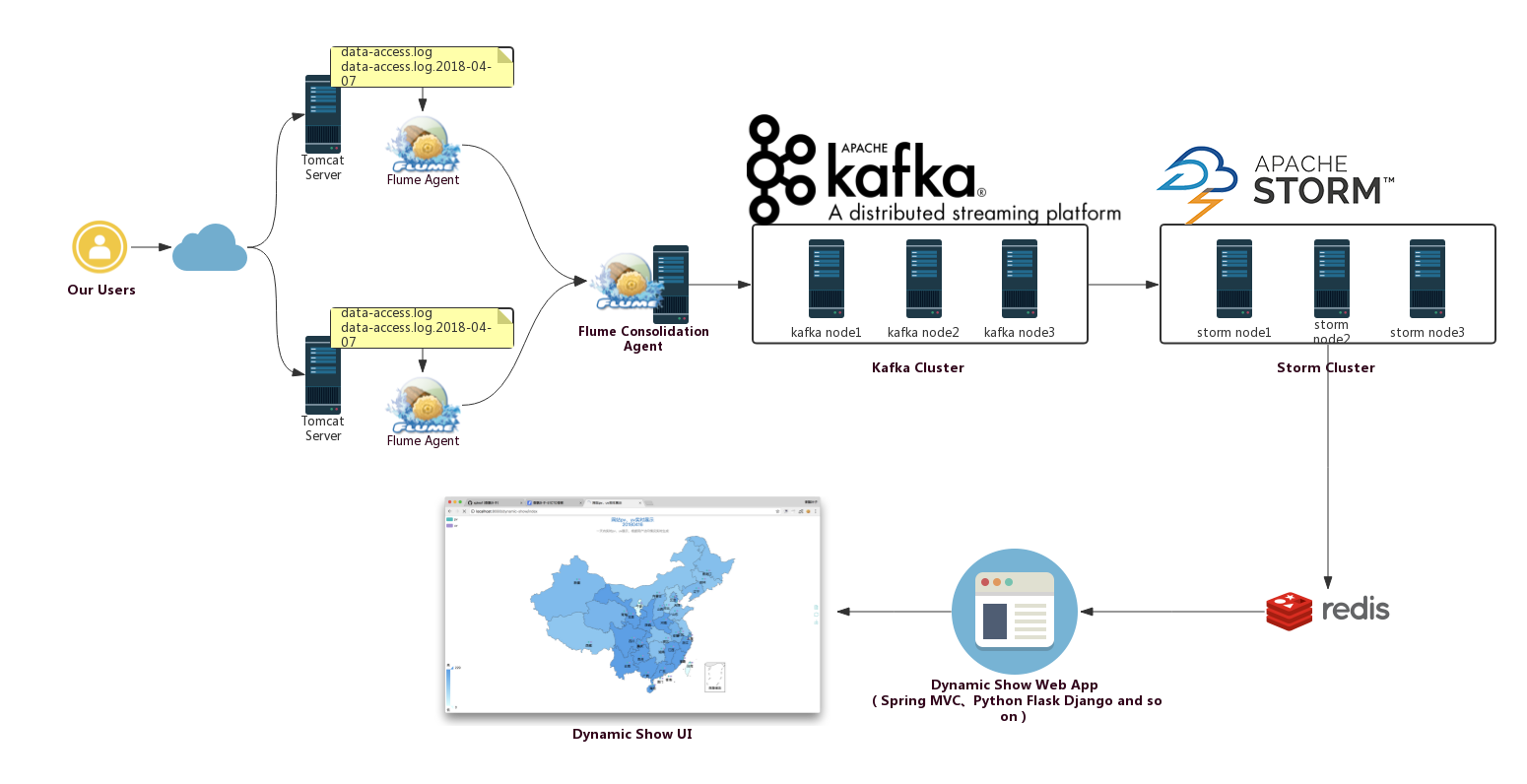

From the previous post on "Poor Data Management Practices", the discussion ended with a high-level approach to one possible solution for data silos. Traditional approaches to solving the data silo problem can cost millions of dollars (even for a moderately sized company). They also typically require a huge integration effort (e.g., data modeling, system engineering, software design, and development). In this post, Flafka, the unofficial name for integrating Flume as a producer for Kafka, is presented as another possible big data solution for data silos.

Without inundating you with technical jargon, Apache Flume is a distributed service that is very efficient at collecting and moving large amounts of data into Hadoop (e.g., click-stream data, security log files, and application data). Flume provides sinks into the Hadoop ecosystem, such as HBase, Solr index, HDFS, and Kafka, to name a few. Additionally, there can be multiple sources, and these built-in mechanisms allow a lot of work to be done without writing a single line of code. The data can also be transformed, augmented, filtered, and aggregated during the ingest process. The architecture is flexible and fault tolerant, with many tuning and failover mechanisms. The available sources, channels, and sinks are listed in Figure 1.

Kafka and Flume have similar, and sometimes overlapping, capabilities. The exceptions are Kafka's functional simplicity and its messaging capabilities. Kafka also provides more durability through replication: each partition is copied across multiple brokers, and therefore across multiple disks. Kafka is extremely simple to set up and configure.
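In Flume, data is transformed, augmented, and filtered during ingest by interceptors attached to a source. A minimal Python sketch of an interceptor chain follows. It assumes events are simple (headers, body) pairs, and every function name is hypothetical. The functions are loosely modeled on the idea behind Flume's built-in timestamp and regex-filtering interceptors, not on their actual implementation.

```python
import re
import time
from typing import Callable, Dict, List, Optional, Tuple

# Simplified event model (not Flume's API): (headers, body).
LogEvent = Tuple[Dict[str, str], str]
Interceptor = Callable[[LogEvent], Optional[LogEvent]]


def timestamp_interceptor(event: LogEvent) -> LogEvent:
    """Augment: add a 'timestamp' header in epoch milliseconds."""
    headers, body = event
    return ({**headers, "timestamp": str(int(time.time() * 1000))}, body)


def regex_filter(pattern: str, exclude: bool = True) -> Interceptor:
    """Filter: drop matching bodies (exclude=True) or keep only matching ones (exclude=False)."""
    compiled = re.compile(pattern)

    def intercept(event: LogEvent) -> Optional[LogEvent]:
        matched = compiled.search(event[1]) is not None
        return None if matched == exclude else event

    return intercept


def uppercase_level(event: LogEvent) -> LogEvent:
    """Transform: normalize a leading log level such as 'warn' to 'WARN'."""
    headers, body = event
    level, sep, rest = body.partition(" ")
    return (headers, level.upper() + sep + rest)


def apply_chain(events: List[LogEvent], chain: List[Interceptor]) -> List[LogEvent]:
    """Run each event through the chain in order; a None result drops the event."""
    out = []
    for event in events:
        for interceptor in chain:
            event = interceptor(event)
            if event is None:
                break  # filtered out; skip the remaining interceptors
        else:
            out.append(event)
    return out


# Usage: drop debug lines, normalize the level, then stamp each event.
events = [({}, "info user logged in"), ({}, "debug cache hit"), ({}, "warn disk 90% full")]
chain = [regex_filter(r"^debug"), uppercase_level, timestamp_interceptor]
result = apply_chain(events, chain)
for headers, body in result:
    print(body, "timestamp" in headers)
# INFO user logged in True
# WARN disk 90% full True
```

The order of the chain matters. Filtering first means later interceptors never spend work on events that will be discarded anyway.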
